Between the Guardrails: GenAI adoption rises. Guardrails and visibility trail behind.

Generative AI is no longer experimental. It is already part of everyday work in most organizations. The growing problem is that the guardrails and training needed to make GenAI safe are not keeping up.

What’s in this issue?

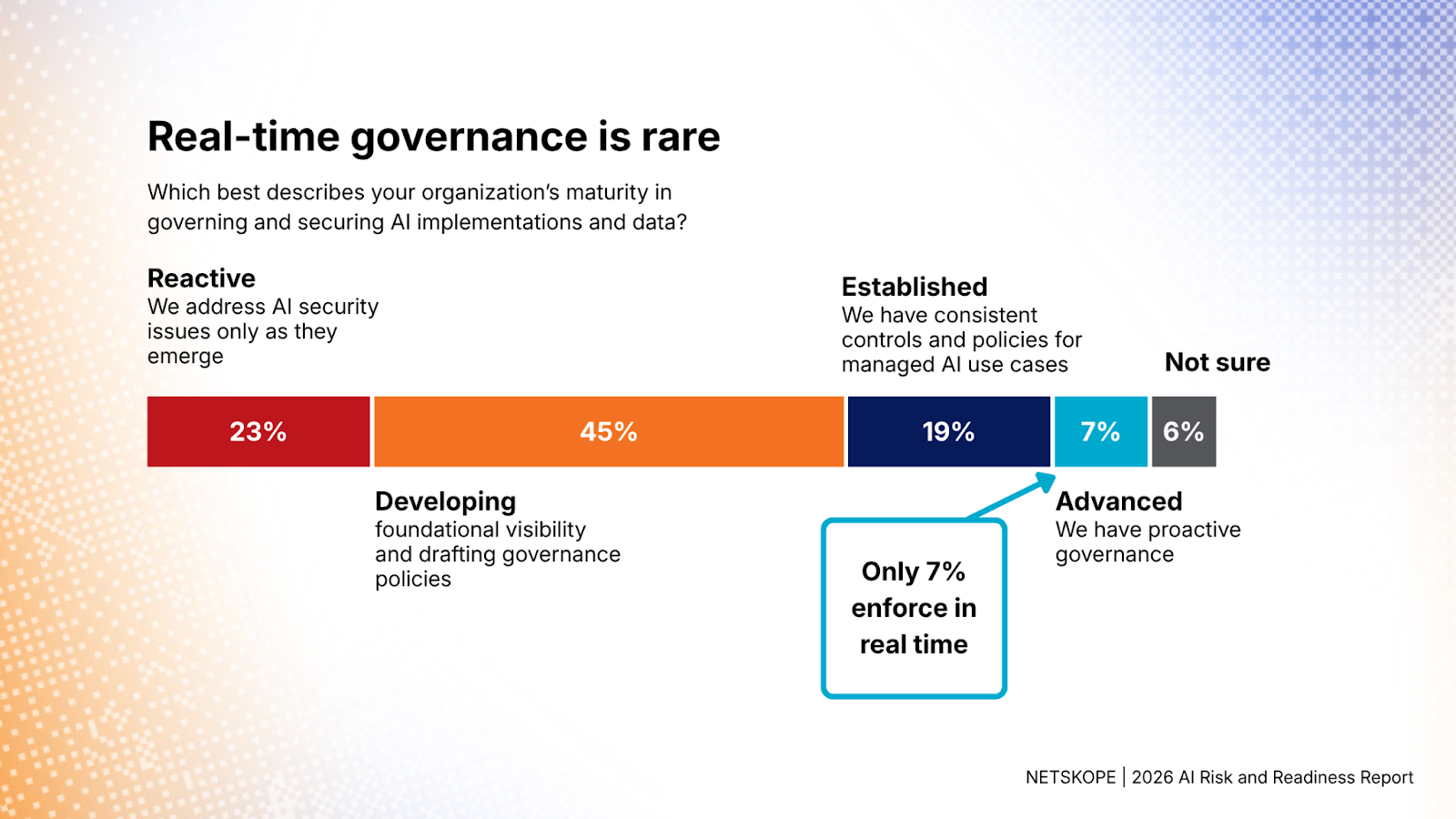

In this latest issue of Between the Guardrails, we look at the widening gap between AI adoption and governance, drawing on fresh research from Netskope. And the gap is getting hard to ignore: the report found that only 7% of those surveyed enforce GenAI policies in real time.

If you want a clear picture of where GenAI stands today – and a few hints on how to make it work for you – read on.

AI adoption outpaces governance

AI is moving into everyday work faster than most organizations can govern it. Earlier this year, Netskope surveyed 1,253 cybersecurity and IT professionals to assess the current state of AI security in organizations. We have summarized the key points.

Organizations have embraced GenAI, but the guardrails lag behind

Netskope’s 2026 AI Risk & Readiness Report found that 73% of organizations already use AI, but only 7% enforce policies in real time. Nearly a third (31%) still rely on written policies alone, while 11% have no enforcement at all.

👉 Why this matters: information can easily slip through the cracks. When a vast majority of people in your organization use GenAI, the crack becomes more like an open door for data leaks.
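To make the difference between a written policy and real-time enforcement concrete, here is a minimal Python sketch of checking a prompt at the moment it is sent. Everything in it is an illustrative assumption: the two patterns, the `redact_prompt` name and the placeholder labels are hypothetical, and it does not represent how Netskope, NROC Security or any other product implements enforcement.

```python
import re

# Hypothetical patterns for illustration only. Real PII detection needs far
# broader coverage than two regular expressions.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, int]:
    """Replace matches with placeholders before the prompt leaves the organization.

    Returns the redacted prompt and the number of redactions made.
    """
    total = 0
    for label, pattern in PII_PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        total += count
    return prompt, total


safe_prompt, hits = redact_prompt(
    "Draft a reply to jane.doe@example.com about card 4111 1111 1111 1111."
)
print(safe_prompt)  # Draft a reply to [EMAIL] about card [CARD].
print(hits)         # 2
```

A written policy asks employees to do this step themselves. Real-time enforcement means a check of this kind runs on every prompt, whether or not the employee remembers the policy.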

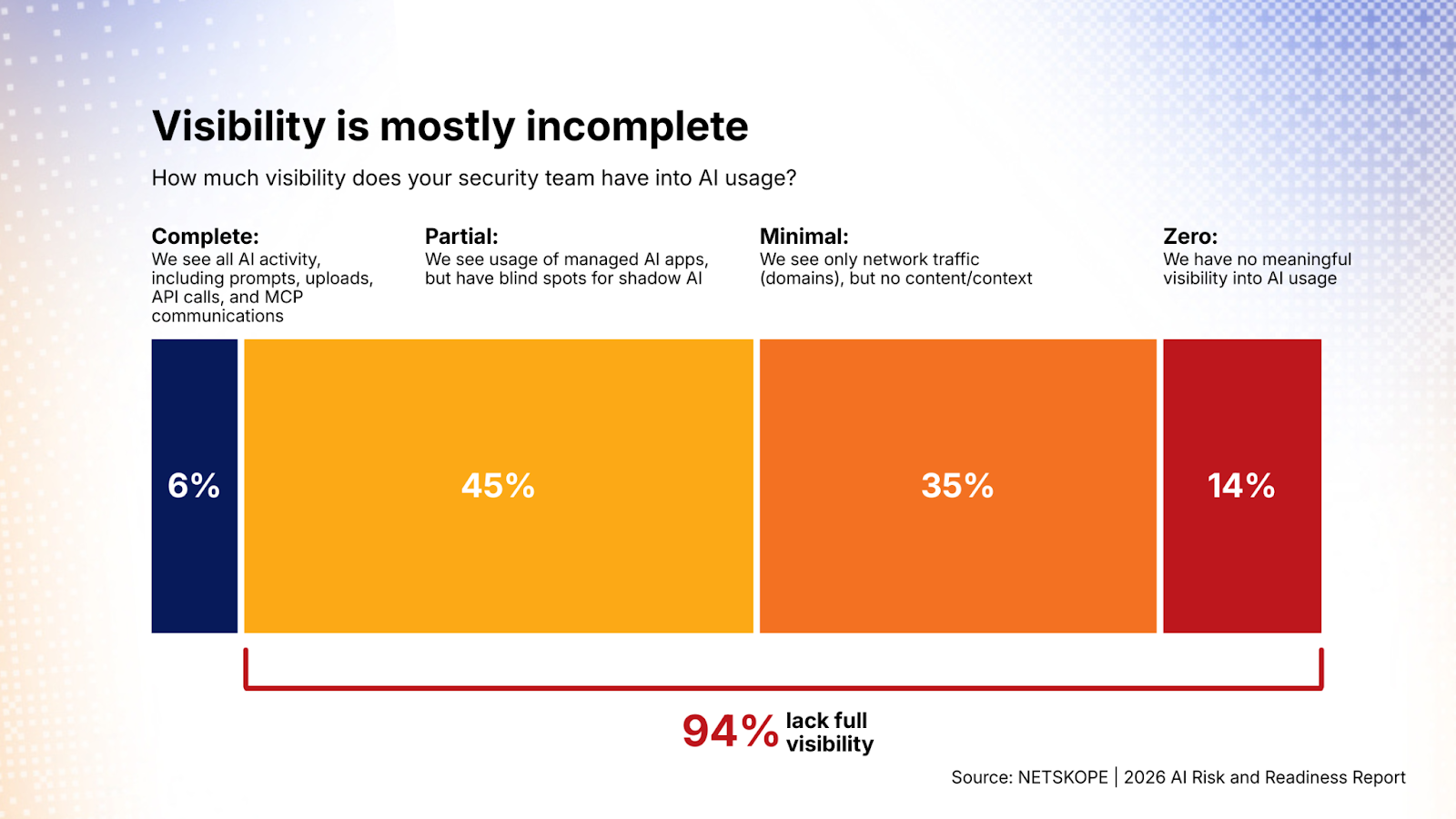

Most security teams lack visibility into AI usage

The report also shows that 94% of security teams surveyed lack full visibility into AI usage in their organizations. The largest group of respondents (45%) said they have only partial visibility: they see usage of managed apps but have no measures to manage shadow AI. Only 6% have complete visibility into AI activity, meaning they see prompts, uploads, API calls and MCP communications.

👉 Why this matters: You cannot stop what you cannot see. Without full visibility, security teams cannot protect their organizations from data leaks, and they cannot verify that policies are actually being followed.

Did you know: During Q1, our customers stopped an average of 177 instances of PII per 100 prompts from entering GenAI apps.

Shadow AI is not monitored

Visibility into GenAI usage is not the only gap. The report also shows that a large majority of respondents (88%) say they cannot distinguish personal AI accounts from corporate ones, which amplifies the risk of data leaks.

👉 Why this matters: Many free versions of GenAI apps use submitted data for model training, which leaves companies extremely exposed.

Curious to learn how to secure GenAI app usage, while increasing productivity in your organization? Watch our latest on-demand webinar on Productivity-First Governance.

In short

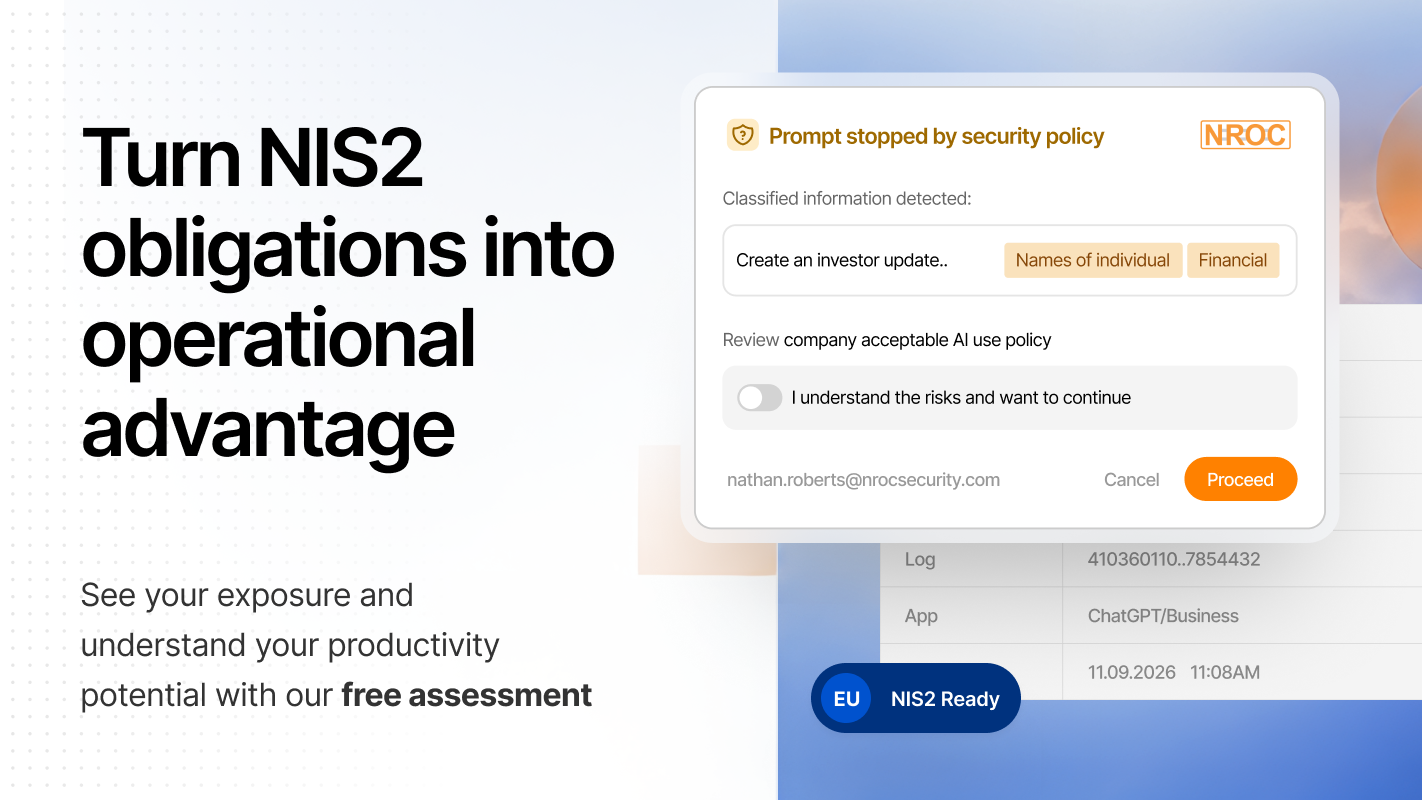

👉 The scale of the risk is clear: Across our customer base, PII or classified content is redacted an average of 4,700 times every month per 1,000 employees – a strong signal that effective guardrails are not optional. As pressure builds to move faster with AI, security teams need guardrails they can deploy just as quickly. This is exactly where NROC Security shines – it's deployed in days, with a network-based architecture that avoids plugins and extensions and keeps operations light.

What's new with NROC

Updated CISO guide for GenAI security

As the Netskope report shows, GenAI has changed the cat-and-mouse game in cybersecurity. Policies on prompts can only be enforced at the moment of prompting, so to get ahead, security teams need to shift right.

We have recently released an updated CISO guide for GenAI security. It focuses on shift-right techniques and aims to help security professionals adapt to ever-changing GenAI threats.

👉 Recommended reading for teams working out how to implement GenAI securely.

Partnership and market momentum

We’ve partnered with Northamber to expand access to secure GenAI through the channel. Qualcom is also investing €500,000 in a new AI security practice, with NROC Security included as a provider.

👉 Both are signs that demand for practical, enterprise-ready AI security is growing.

Product releases

Latest product features:

- Microsoft Copilot log sync is now available for all users

- Microsoft Sentinel push log export is now available

- SSO-only login can now be enabled for the NROC Management Console

Want to learn more about our product? Watch our short on-demand demo, or book a demo, to get a more in-depth understanding of what NROC Security can do.

Final thoughts for this issue

GenAI is already changing how people work.

The organizations that benefit the most won't be the ones that throw the most GenAI tools at their employees, but the ones that combine adoption with clear guardrails. Only with proper controls can GenAI become a real driver of productivity without compromising security.

That is exactly what we aim to help organizations achieve at NROC Security: harnessing the power of GenAI with confidence, while building secure adoption at scale.

Watch our short on-demand demo, or book a demo, to get a more in-depth understanding of what NROC Security can do.
